For years, dedicated Star Trek fans have been using AI in an attempt to make a version of the acclaimed series Deep Space 9 that looks decent on modern TVs. It sounds a bit ridiculous, but I was surprised to find that it’s actually quite good — certainly good enough that media companies ought to pay attention (instead of just sending me copyright strikes).

I was inspired earlier this year to watch the show, a fan favorite that I occasionally saw on TV when it aired but never really thought twice about. After seeing Star Trek: The Next Generation’s revelatory remaster, I felt I ought to revisit its less galaxy-trotting, more ensemble-focused sibling. Perhaps, I thought, it was in the middle of an extensive remastering process as well. Nope!

Sadly, I was to find out that, although the TNG remaster was a huge triumph technically, the timing coincided with the rise of streaming services, meaning the expensive Blu-ray set sold poorly. The process cost more than $10 million, and if it didn’t pay off for the franchise’s most reliably popular series, there’s no way the powers that be do it again for DS9, well-loved but far less bankable.

What this means is that if you want to watch DS9 (or Voyager for that matter), you have to watch it more or less at the quality in which it was broadcast back in the ’90s. Like TNG, it was shot on film but converted to video tape at standard definition, roughly 480 interlaced lines (480i). And although the DVDs provided better image quality than the broadcasts (due to things like pulldown and color depth), they were still, ultimately, limited by the format in which the show was finished.

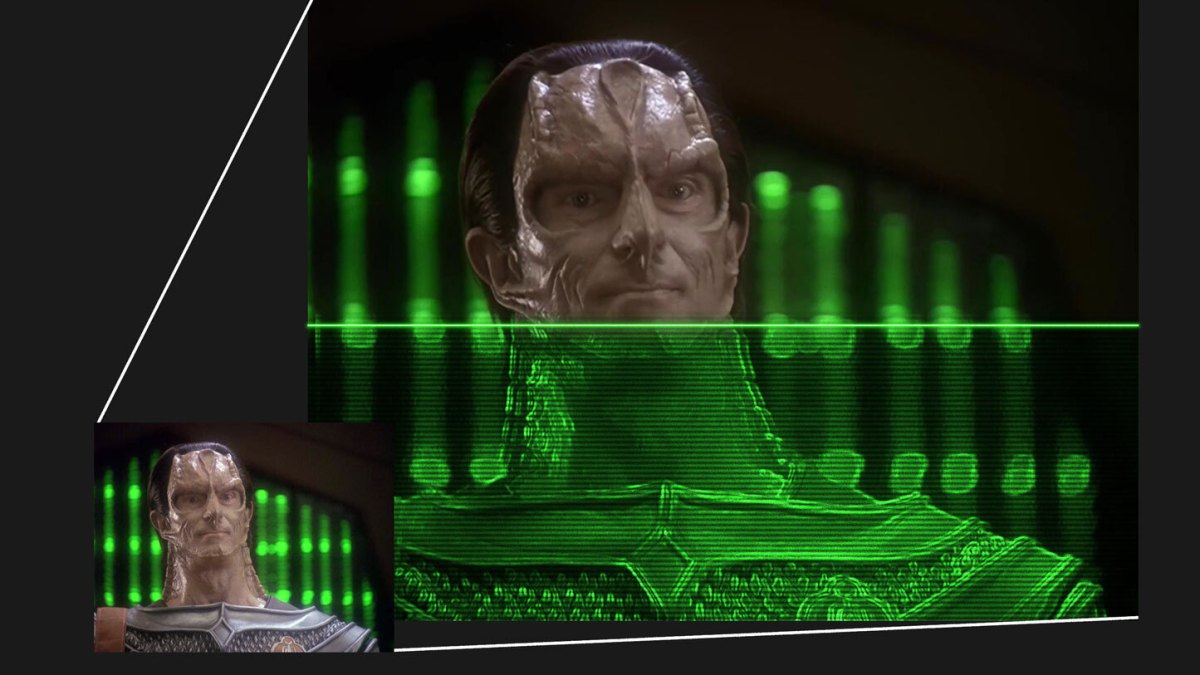

Not great, right? And this is about as good as it gets, especially early on. Image credits: Paramount

For TNG, they went back to the original negatives and basically re-edited the entire show, redoing effects and compositing, involving great cost and effort. Perhaps that may happen in the 25th century for DS9, but at present there are no plans, and even if they announced it tomorrow, years would pass before it came out.

So: as a would-be DS9 watcher, spoiled by the gorgeous TNG rescan, and who dislikes the idea of a shabby NTSC broadcast image being shown on my lovely 4K screen, where does that leave me? As it turns out: not alone.

To boldly upscale…

For years, fans of shows and movies left behind by the HD train have worked surreptitiously to find and distribute better versions than what is made officially available. The most famous example is the original Star Wars trilogy, which was irreversibly compromised by George Lucas during the official remaster process, leading fans to find alternative sources for certain scenes: laserdiscs, limited editions, promotional media, forgotten archival reels, and so on. These totally unofficial editions are a constant work in progress, and in recent years have begun to implement new AI-based tools as well.

These tools are largely about intelligent upscaling and denoising, the latter of which is of more concern in the Star Wars world, where some of the original film footage is incredibly grainy or degraded. But you might think that upscaling, making an image bigger, is a relatively simple process — why get AI involved?

Certainly there are simple ways to upscale, or convert a video’s resolution to a higher one. This is done automatically when you have a 720p signal going to a 4K TV, for instance. The 1280×720 resolution image doesn’t appear all tiny in the center of the 3840×2160 display — it gets stretched by a factor of 3 in each direction so that it fits the screen; but while the image appears bigger, it’s still 720p in resolution and detail.
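To make that concrete, here is a minimal sketch of the dumbest possible stretch: every pixel is simply repeated three times across and three times down. The function name `upscale_nearest` and the toy 2×2 "image" are my own illustrations, not anything a TV actually runs, but the principle is the same.

```python
def upscale_nearest(image, factor):
    """Repeat every pixel `factor` times horizontally and vertically."""
    out = []
    for row in image:
        stretched = [px for px in row for _ in range(factor)]
        # Emit `factor` independent copies of the stretched row.
        out.extend(list(stretched) for _ in range(factor))
    return out

# 720p to 4K is an exact 3x stretch in each direction.
assert 1280 * 3 == 3840 and 720 * 3 == 2160

tiny = [[1, 2],
        [3, 4]]
big = upscale_nearest(tiny, 3)
print(len(big[0]), "x", len(big))                 # 6 x 6
print(sorted({px for row in big for px in row}))  # [1, 2, 3, 4]
```

The output has nine times as many pixels but exactly the same four values: bigger, not more detailed.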

A simple, fast algorithm like bilinear filtering makes a smaller image palatable on a big screen even when it is not an exact 2x or 3x stretch, and there are some scaling methods that work better with some media (for instance animation, or pixel art). But overall you might fairly conclude that there isn’t much to be gained by a more intensive process.
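For the curious, a rough sketch of bilinear filtering on a grayscale image looks something like this. The function name `upscale_bilinear` and the corner-aligned sampling convention are assumptions on my part; real scalers differ in exactly how they map output pixels back to the source.

```python
def upscale_bilinear(image, new_w, new_h):
    """Resize a grayscale image (list of rows of floats) by bilinear interpolation."""
    old_h, old_w = len(image), len(image[0])
    out = []
    for y in range(new_h):
        # Map the output row to a fractional source row (corner-aligned).
        sy = y * (old_h - 1) / (new_h - 1) if new_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, old_h - 1)
        fy = sy - y0
        row = []
        for x in range(new_w):
            sx = x * (old_w - 1) / (new_w - 1) if new_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, old_w - 1)
            fx = sx - x0
            # Blend the four nearest source pixels by distance.
            top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
            bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out

edge = [[0.0, 100.0]]            # one dark pixel next to one bright pixel
wide = upscale_bilinear(edge, 5, 1)
print(wide[0])  # [0.0, 25.0, 50.0, 75.0, 100.0]
```

Note what happened to the hard edge: it became a smooth ramp. That is easier on the eyes than blocky pixels, and it works at any scale factor, but nothing new was learned about the picture.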

And that’s true to an extent, until you start down the nearly bottomless rabbit hole of creating an improved upscaling process that actually adds detail. But how can you “add” detail that the image doesn’t already contain? Well, it does contain it — or rather, imply it.

Here’s a very simple example. Imagine an old TV showing an image of a green circle on a background that fades from blue to red (I used this CRT filter for a basic mockup).

You can see it’s a circle, of course, but look closely and it’s actually quite fuzzy where the circle and background meet, and the color gradient is visibly stepped. It’s limited by the resolution and by the video codec and broadcast method, not to mention the sub-pixel layout and phosphors of an old TV.

But if I asked you to recreate that image in high resolution and color, you could actually do so with better quality than you’d ever seen it, crisper and with smoother colors. How? Because there is more information implicit in the image than simply what you see. If you’re reasonably sure what was there before those details were lost when it was encoded, you can put them back, like so:

There’s a lot more detail carried in the image that just isn’t obviously visible — so really, we aren’t adding but recovering it. In this example I’ve made the change extreme for effect (it’s rather jarring, in fact), but in photographic imagery it’s usually much less stark.
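Here is a toy version of the circle example in code. It is a sketch under one big assumption, namely that we already know the fuzzy blob is a circle; the helper names `render_circle` and `fit_circle` are invented for illustration, and this is not how any real upscaler works internally. It only shows that a low-resolution image plus prior knowledge can pin down more than the pixels alone seem to show.

```python
import math

def render_circle(size, cx, cy, r, samples=8):
    """Render per-pixel circle coverage (0..1) on a size x size grid by supersampling."""
    img = []
    for py in range(size):
        row = []
        for px in range(size):
            hits = 0
            for sy in range(samples):
                for sx in range(samples):
                    x = px + (sx + 0.5) / samples
                    y = py + (sy + 0.5) / samples
                    if (x - cx) ** 2 + (y - cy) ** 2 <= r * r:
                        hits += 1
            row.append(hits / samples ** 2)
        img.append(row)
    return img

def fit_circle(img):
    """Estimate centre and radius from coverage, assuming the shape is a disc."""
    total = sum(sum(row) for row in img)
    cx = sum(v * (x + 0.5) for row in img for x, v in enumerate(row)) / total
    cy = sum(v * (y + 0.5) for y, row in enumerate(img) for v in row) / total
    # Total coverage approximates the disc's area, and area = pi * r^2.
    return cx, cy, math.sqrt(total / math.pi)

# A fuzzy-edged 16x16 "broadcast" of a circle whose true centre sits between pixels.
low = render_circle(16, 8.3, 7.6, 5.0)
cx, cy, r = fit_circle(low)
print(f"estimated centre=({cx:.2f}, {cy:.2f}), radius={r:.2f}")

# Redraw at 8x the resolution with a crisp edge, using only what was inferred.
scale = 8
high = render_circle(16 * scale, cx * scale, cy * scale, r * scale, samples=1)
```

The estimate lands within a fraction of a pixel of the true centre and radius, even though no single low-res pixel "contains" that information. Learned upscalers do something loosely analogous, with priors picked up from training data instead of a hand-written "it's a circle" rule.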

Intelligent embiggening

The above is a very simple example of recovering detail, and it’s actually something that’s been done systematically for years in restoration efforts across numerous fields, digital and analog. But while you can see it’s possible to create an image with more apparent detail than the original, you also see that it’s only possible because of a certain level of understanding or intelligence about that image. A simple mathematical formula can’t do it. Fortunately, we are well beyond the days when a simple mathematical formula is our only means to improve image quality.

From open source tools to branded ones from Adobe and Nvidia, upscaling software has become much more mainstream as graphics cards capable of the complex calculations it requires have proliferated. The need to gracefully upgrade a clip or screenshot from low resolution to high is commonplace these days across dozens of industries and contexts.

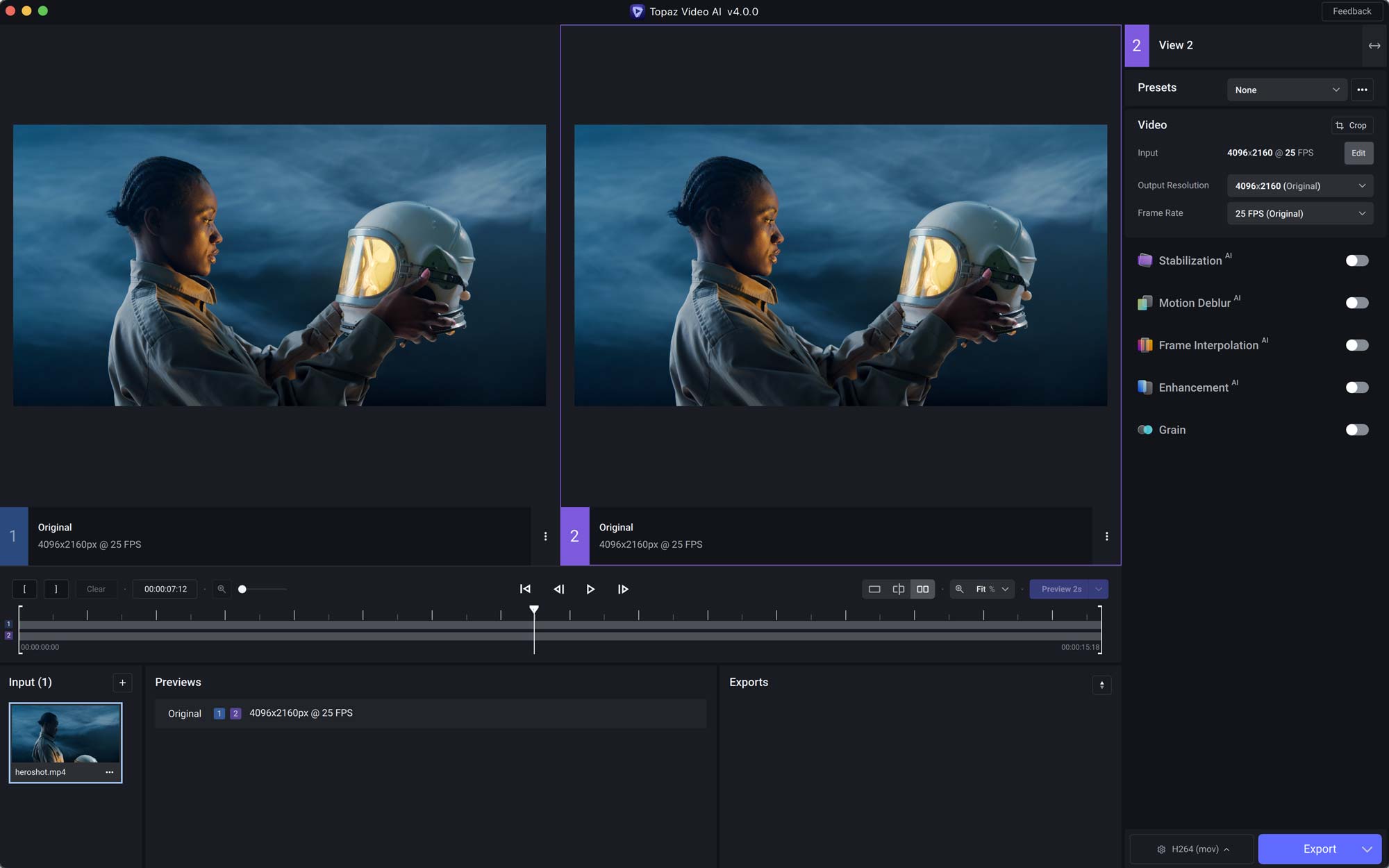

Video effects suites now incorporate complex image analysis and context-sensitive algorithms, so that for instance skin or hair is treated differently than the surface of water or the hull of a starship. Each parameter and algorithm can be adjusted and tweaked individually depending on the user’s need or the imagery being upscaled. Among the most used options is Topaz, a suite of video processing tools that employ machine learning techniques.

Image credits: Topaz AI

The trouble with these tools is twofold. First, the intelligence only goes so far: settings that might be perfect for a scene in space are totally unsuitable for an interior scene, or a jungle or boxing match. In fact even multiple shots within one scene may require different approaches: different angles, features, hair types, lighting. Finding and locking in those Goldilocks settings is a lot of work.

Second, these algorithms aren’t cheap or (especially when it comes to open source tools) easy. You don’t just pay for a Topaz license — you have to run it on something, and every image you put through it uses a non-trivial amount of computing power. Calculating the various parameters for a single frame might take a few seconds, and when you consider there are 30 frames per second for 45 minutes per episode, suddenly you’re running your $1,000 GPU at its limit for hours and hours at a time — perhaps to just throw away the results when you find a better combination of settings a little later. Or maybe you pay for calculating in the cloud, and now your hobby has another monthly fee.
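A quick back-of-the-envelope calculation shows how fast that adds up. The frame rate and runtime are the figures above; the 3 seconds per frame is purely my stand-in for "a few seconds," and real numbers will vary wildly with hardware and settings.

```python
FPS = 30                  # frames per second, as quoted above
EPISODE_MINUTES = 45      # runtime quoted above
SECONDS_PER_FRAME = 3.0   # assumption: stand-in for "a few seconds"

frames = FPS * 60 * EPISODE_MINUTES
gpu_hours = frames * SECONDS_PER_FRAME / 3600

print(f"{frames:,} frames per episode")              # 81,000 frames per episode
print(f"{gpu_hours:.1f} GPU-hours per single pass")  # 67.5 GPU-hours per single pass
```

That is one pass over one episode with one group of settings. Change a parameter and you start again.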

Fortunately, there are people like Joel Hruska, for whom this painstaking, costly process is a passion project.

“I tried to watch the show on Netflix,” he told me in an interview. “It was abominable.”

Like me and many (but not that many) others, he eagerly anticipated an official remaster of this show, the way Star Wars fans expected a comprehensive remaster of the original Star Wars trilogy theatrical cut. Neither community got what they wanted.

“I’ve been waiting 10 years for Paramount to do it, and they haven’t,” he said. So he joined with the other, increasingly well equipped fans who were taking matters into their own hands.

Time, terabytes, and taste

Hruska has documented his work in a series of posts on ExtremeTech, and is always careful to explain that he is doing this for his own satisfaction and not to make money or release publicly. Indeed, it’s hard to imagine even a professional VFX artist going to the lengths Hruska has to explore the capabilities of AI upscaling and applying it to this show in particular.

“This isn’t a boast, but I’m not going to lie,” he began. “I have worked on this sometimes for 40-60 hours a week. I have encoded the episode ‘Sacrifice of Angels’ over 9,000 times. I did 120 Handbrake encodes — I tested every single adjustable parameter to see what the results would be. I’ve had to dedicate 3.5 terabytes to individual episodes, just for the intermediate files. I have brute-forced this to an enormous degree… and I have failed so many times.”

He showed me one episode he’d encoded that truly looked like it had been properly remastered by a team of experts: not to the point where you think it was shot in 4K and HDR, but enough that you aren’t constantly thinking “my god, did TV really look like this?”

“I can create an episode of DS9 that looks like it was filmed in early 720p. If you watch it from 7-8 feet back, it looks pretty good. But it has been a long and winding road to improvement,” he admitted. The episode he shared was “a compilation of 30 different upscales from 4 different versions of the video.”

Image credits: Joel Hruska/Paramount

Sounds over the top, yes. But it is also an interesting demonstration of the capabilities and limitations of AI upscaling. The intelligence it has is very small in scale, more concerned with pixels and contours and gradients than the far more subjective qualities of what looks “good” or “natural.” And just like tweaking a photo one way might bring out someone’s eyes but blow out their skin, and another way vice versa, an iterative and multi-layered approach is needed.

The process, then, is far less automated than you might expect — it’s a matter of taste, familiarity with the tech, and serendipity. In other words, it’s an art.

“The more I’ve done, the more I’ve discovered that you can pull detail out of unexpected places,” he said. “You take these different encodes and blend them together, you draw detail out in different ways. One is for sharpness and clarity, the next is for healing some damage, but when you put them on top of each other, what you get is a distinctive version of the original video that emphasizes certain aspects and regresses any damage you did.”

“You’re not supposed to run video through Topaz 17 times; it’s frowned on. But it works! A lot of the old rulebook doesn’t apply,” he said. “If you try to go the simplest route, you will get a playable video but it will have motion errors [i.e. video artifacts]. How much does that bother you? Some people don’t give a shit! But I’m doing this for people like me.”

Like so many passion projects, the audience is limited. “I wish I could release my work, I really do,” Hruska admitted. “But it would paint a target on my back.” For now it’s for him and fellow Trek fans to enjoy in, if not secret, at least plausible deniability.

Real time with Odo

Anyone can see that AI-powered tools and services are trending toward accessibility. The kind of image analysis that Google and Apple once had to do in the cloud can now be done on your phone. Voice synthesis can be done locally as well, and soon we may have ChatGPT-esque conversational AI that doesn’t need to phone home. What fun that will be!

This is enabled by several factors, one of which is more efficient dedicated chips. GPUs have done the job well but were originally designed for something else. Now, small chips are being built from the ground up to perform the kind of math at the heart of many machine learning models, and they are increasingly found in phones, TVs, laptops, you name it.

Real time intelligent image upscaling is neither simple nor easy to do properly, but it is clear to just about everyone in the industry that it’s at least part of the future of digital content.

Imagine the bandwidth savings if Netflix could send a 720p signal that looked 95% as good as a 4K one when your TV, running the special Netflix algorithms, upscales it. (In fact Netflix already does something like this, though that’s a story for another time.)

Imagine if the latest game always ran at 144 frames per second in 4K, because actually it’s rendered at a lower resolution and intelligently upscaled every 7 milliseconds. (That’s what Nvidia envisions with DLSS and other processes its latest cards enable.)

Today, the power to do this is still a little beyond the average laptop or tablet, and even powerful GPUs doing real-time upscaling can produce artifacts and errors due to their more one-size-fits-all algorithms.

Copyright strike me down, and… (you know the rest)

The approach of rolling your own upscaled DS9 (or for that matter Babylon 5, or some other show or film that never got the dignity of a high-definition remaster) is certainly the legal one, or the closest thing to it. But the simple truth is that there is always someone with more time and expertise who will do the job better, and sometimes they’ll even upload the final product to a torrent site.

That’s what actually set me on the path to learning all this — funnily enough, the simplest way to find out if something is available to watch in high quality is often to look at piracy sites, which in many ways are refreshingly straightforward. Searching for a title, year, and quality level (like 1080p or 4K) quickly shows whether it has had a recent, decent release. Whether you then go buy the Blu-ray (increasingly a good investment) or take other measures is between you, god, and your internet provider.

A representative scene, imperfect but better than the original.

I had originally searched for “Deep Space 9 720p,” in my innocence, and saw this AI upscaled version listed. I thought “there’s no way they can put enough lipstick on that pig to…” then I abandoned my metaphor because the download had finished, and I was watching it and making a “not bad” face.

The version I got clocks in at around 400 megabytes per 45-minute episode, low by most standards, and while there are clearly smoothing problems, badly interpolated details, and other flaws, it was still worlds ahead of the “official” version. As the quality of the source material improves in later seasons, the upscaling improves with it. Watching it let me enjoy the show without thinking too much about its format limitations; it appeared more or less as I (wrongly) remember it looking.
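To put "low by most standards" in numbers, here is the average bitrate that file size implies. I'm assuming decimal megabytes and lumping audio in with the video, so treat the result as a rough figure.

```python
FILE_MEGABYTES = 400      # per-episode size quoted above
EPISODE_MINUTES = 45

bits = FILE_MEGABYTES * 1_000_000 * 8   # assumption: decimal megabytes
seconds = EPISODE_MINUTES * 60
mbps = bits / seconds / 1_000_000

print(f"{mbps:.2f} Mbit/s average")  # 1.19 Mbit/s average
```

A little over one megabit per second for everything, picture and sound, which makes the result all the more surprising.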

There are problems, yes — sometimes detail is lost instead of gained, such as what you see in the header image, the green bokeh being smeared into a glowing line. But in motion and on the whole it’s an improvement, especially in the reduction of digital noise and poorly defined edges. I happily binged five or six episodes, pleasantly surprised.

A day or two later I got an email from my internet provider saying I’d received a DMCA complaint, a copyright strike. In case you were wondering why this post doesn’t have more screenshots.

Now, I would argue that what I did was technically illegal, but not wrong. As a fair-weather Amazon Prime subscriber with a free Paramount+ trial, I had access to those episodes, but in poor quality. Why shouldn’t I, as a matter of fair use, opt for a fan-enhanced version of the content I’m already watching legally? For that matter, why not shows that have had botched remasters, like Buffy the Vampire Slayer (which also can be found upscaled) or shows unavailable due to licensing shenanigans?

Okay, it wouldn’t hold up in court. But I’m hoping history will be my judge, not some ignorant gavel-jockey who thinks AI is what the Fonz said after slapping the jukebox.

The real question, however, is why Paramount, or CBS, and anyone else sitting on properties like DS9 haven’t embraced the potential of intelligent upscaling. It’s gone from highly technical oddity to easily leveraged option, something a handful of smart people could do in a week or two. If some anonymous fan can create the value I experienced with ease (or relative ease — no doubt a fair amount of work went into it), why not professionals?

“I’m friends with VFX people, and there are people that worked on the show that want nothing more than to remaster it. But not all the people at Paramount understand the value of old Trek,” Hruska said.

“They should be the ones to do it; they have the knowledge and the expertise. If the studio cared, the way they cared about TNG, they could make something better than anything I could make. But if they don’t care, then the community will always do better.”